There's no such thing as a one-line change

Let's talk about prediction

The Sydney Opera House is a fantastic example of our inability to predict. The budget was originally estimated at AUD 7M. It ended up costing AUD 102M. The project was finally delivered 10 years late and cost more than 14 times the original estimate. Human beings are terrible at prediction.

Still not convinced? How about the fact that the entire mutual funds industry has historically performed worse than the benchmark portfolio?

"However, the data shows that the vast majority of actively managed funds underperformed on this metric as well. Among domestic equity funds, while 90% have underperformed on the S&P Composite 1500 over the past 20 years, an even greater 95% did so on a risk-adjusted basis." (Source)

This is an entire industry of well-paid, highly educated professionals trying to predict which stocks will perform better than others. The data is crystal clear: they aren't any good at predicting.

Now stop and think about the predictions that you make on a daily basis. If you are a software engineer, chances are that you are constantly being asked to estimate the time it will take to deliver a given feature. How well would you score if there were a benchmark?

Everyone wants to be the hero

A serious bug just hit your production environment. Luckily, you use crash reporting software, so you quickly find out about the issue. The PM asks: "When can we have a fix?" Dave, the brave (and young) developer, stands up and says in a calm voice: "Easy... it's a one-line change!" Everyone turns their head, amazed at Dave's ability to store large programs in his head. "Expect a fix in no time", he says.

A few hours pass. The bug hasn't been fixed. The PM asks again, now with a hint of worry in his voice: "Any updates on the fix?" "Sorry", says Dave, "it's taking longer than I expected".

A prediction with no margin for error

Do you know what's worse than a prediction? A prediction with no margin for error. Dave's one-line-change statement is exactly that. He claimed that he could predict, with perfect precision, the change that would save the day. Guess what? He has a very high probability of not being right.

A bet with downside but no upside

Another way to think about Dave's one-line change is as a bet on the toss of a biased coin, one with a low probability of landing in his favor. If he is right, the issue is solved quickly and Dave gets a pat on the back for doing his job. If he is wrong, his reputation and the team's trust in him suffer.

This is a bet with a negative expected return. It's not just that the odds are against him. If he does win, he wins very little. If he loses, he loses big-time. Play this game a few times and you will soon find that people lose trust in you. It's a quick and easy way to ruin your reputation.
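To make the negative expected return concrete, here is a minimal sketch in Python. The probability and the payoff sizes are made-up numbers chosen purely for illustration. The story only tells us that the chance of being right is low, the win is small, and the loss is large.

```python
def expected_return(p_right: float, gain: float, loss: float) -> float:
    """Expected return of a bet that pays `gain` with probability `p_right`
    and costs `loss` otherwise."""
    return p_right * gain - (1 - p_right) * loss


# Hypothetical values, in arbitrary "reputation points":
P_RIGHT = 0.2      # assumed chance that it really is a one-line change
SMALL_GAIN = 1.0   # assumed value of a pat on the back
BIG_LOSS = 10.0    # assumed cost of a broken promise

dave = expected_return(P_RIGHT, SMALL_GAIN, BIG_LOSS)
fair_coin = expected_return(0.5, SMALL_GAIN, BIG_LOSS)

print(f"Dave's bet:     {dave:.1f}")
print(f"With fair odds: {fair_coin:.1f}")
```

With these assumed payoffs the bet loses on average even with a fair coin. The lopsided payoff does most of the damage, and the bad odds only make it worse.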

There's no such thing as a one-line change

Now, even if good ol' Dave is right and the change is actually just a single line of code, he will still have to perform a series of additional steps to get the change to production. Here's a short list of what a typical team needs to do before getting any change into prod:

- Write tests

- Compilation

- Run tests

- CI pipeline

- Code review

- Deployment

Assuming that every step takes 5 to 10 minutes on average to complete, even if the change takes 10 seconds to write, the whole process will take 30 to 60 minutes to finish.
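The arithmetic behind that range, as a small Python sketch. The step names mirror the list above and the 5 to 10 minute bounds are the same assumption as in the text. The function name `pipeline_minutes` is just for illustration.

```python
STEPS = [
    "write tests",
    "compilation",
    "run tests",
    "CI pipeline",
    "code review",
    "deployment",
]


def pipeline_minutes(steps, min_per_step=5, max_per_step=10):
    """Return (low, high) total minutes, assuming every step takes
    between `min_per_step` and `max_per_step` minutes."""
    return len(steps) * min_per_step, len(steps) * max_per_step


low, high = pipeline_minutes(STEPS)
print(f"{len(STEPS)} steps: {low} to {high} minutes")
```

The 10 seconds spent typing the change are a rounding error next to everything else.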

The wet bias: Communication is more important than accurate predictions

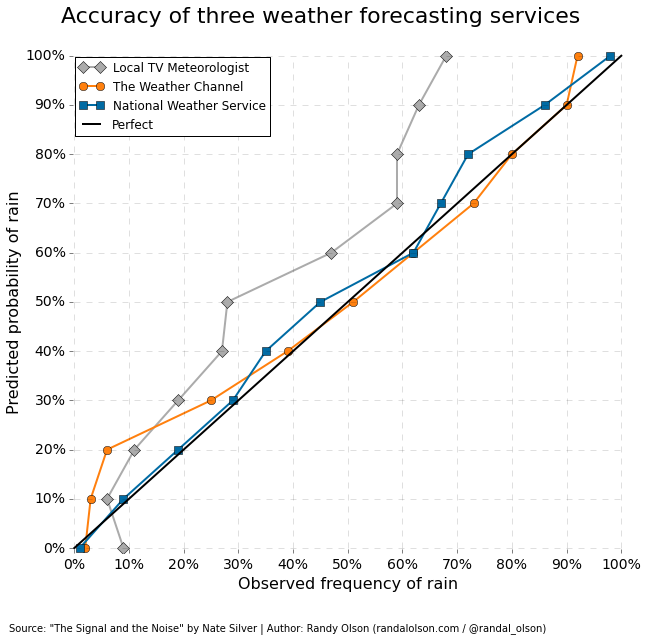

The wet bias is a phenomenon where weather forecasters overestimate the chance of rain. The reason is very simple: if they predict it's not going to rain and it does, people might have a terrible day at the beach and blame the forecast. So they optimize for reducing the kind of errors that can harm their reputation.

Software development has similar mechanics. Take a page from the weather forecaster's book and communicate your predictions with wide buffers to allow for mistakes.
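One possible way to pick that buffer, sketched in Python, is to look at how far off your past estimates were and scale the new one accordingly. The overrun ratios below are invented for illustration and the helper `buffered_estimate` is hypothetical. Treat it as a starting point, not a method this post prescribes.

```python
def buffered_estimate(point_estimate_hours, past_overrun_ratios):
    """Turn a single-number estimate into a (low, high) range by scaling it
    with the smallest and largest past ratio of actual time to estimated time."""
    low = point_estimate_hours * min(past_overrun_ratios)
    high = point_estimate_hours * max(past_overrun_ratios)
    return low, high


# Hypothetical history: actual time divided by estimated time for past tasks.
past_ratios = [1.0, 1.5, 2.0, 4.0]

low, high = buffered_estimate(2.0, past_ratios)
print(f"Expect a fix in {low:.0f} to {high:.0f} hours")
```

Saying "2 to 8 hours" instead of "2 hours" costs nothing up front and leaves room for the surprises that almost always show up.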

Conclusion

The next time you are asked to give an estimate, think about Dave. Realize that you are being asked to perform a task at which most humans are terrible, and act accordingly. Start by allowing for a decent margin for error. Never make a prediction with no room for mistakes.

Realize that even if you could get very good at estimation, you will always have the odds against you, so become a skeptic of your own estimates.

Many thanks to @timonbimon and @PetyakMi for reviewing and giving feedback.

